Software Consulting & Development

FULL-STACK ENGINEERING & ARCHITECTURE

We build custom software for immersive installations and digital experiences: web applications, real-time graphics engines, interactive kiosks, sensor-driven experiences, and the show-control tools that run live events.

- Lead time: 4 – 16 weeks

- Stack: React · Node · Three.js · Unity

- Platforms: Web · installation · mobile

- Support: Ongoing support available

Engineering that powers immersive experiences - and everything around them.

Our software team builds the platforms, tools, and integrations that bring creative visions to life. Whether it's a real-time GPU shader pipeline for generative visuals, a full-stack web application for a client's business, or the control systems behind a multi-projector installation, we architect solutions that are robust, scalable, and tailored to the project.

We've built everything from e-commerce platforms and interactive museum kiosks to real-time dome simulation tools and data-driven visualizations for NASA. Our approach is hands-on and collaborative - we work directly with stakeholders to understand the problem, design the architecture, and ship production-ready code.

Web Applications

Full-stack web apps, platforms, and dashboards - from concept to deployment. React, Node, Astro, and beyond.

Real-Time Graphics

GPU shader pipelines, generative visual engines, and real-time rendering for installations and live performance.

Systems Architecture

Infrastructure design, API development, database architecture, and DevOps for projects of any scale.

Interactive Installations

Software for sensor-driven experiences, touch interfaces, projection control systems, and museum interactives.

Selected Projects

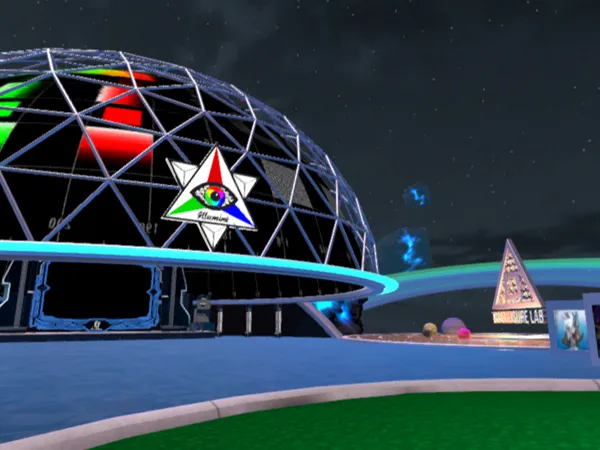

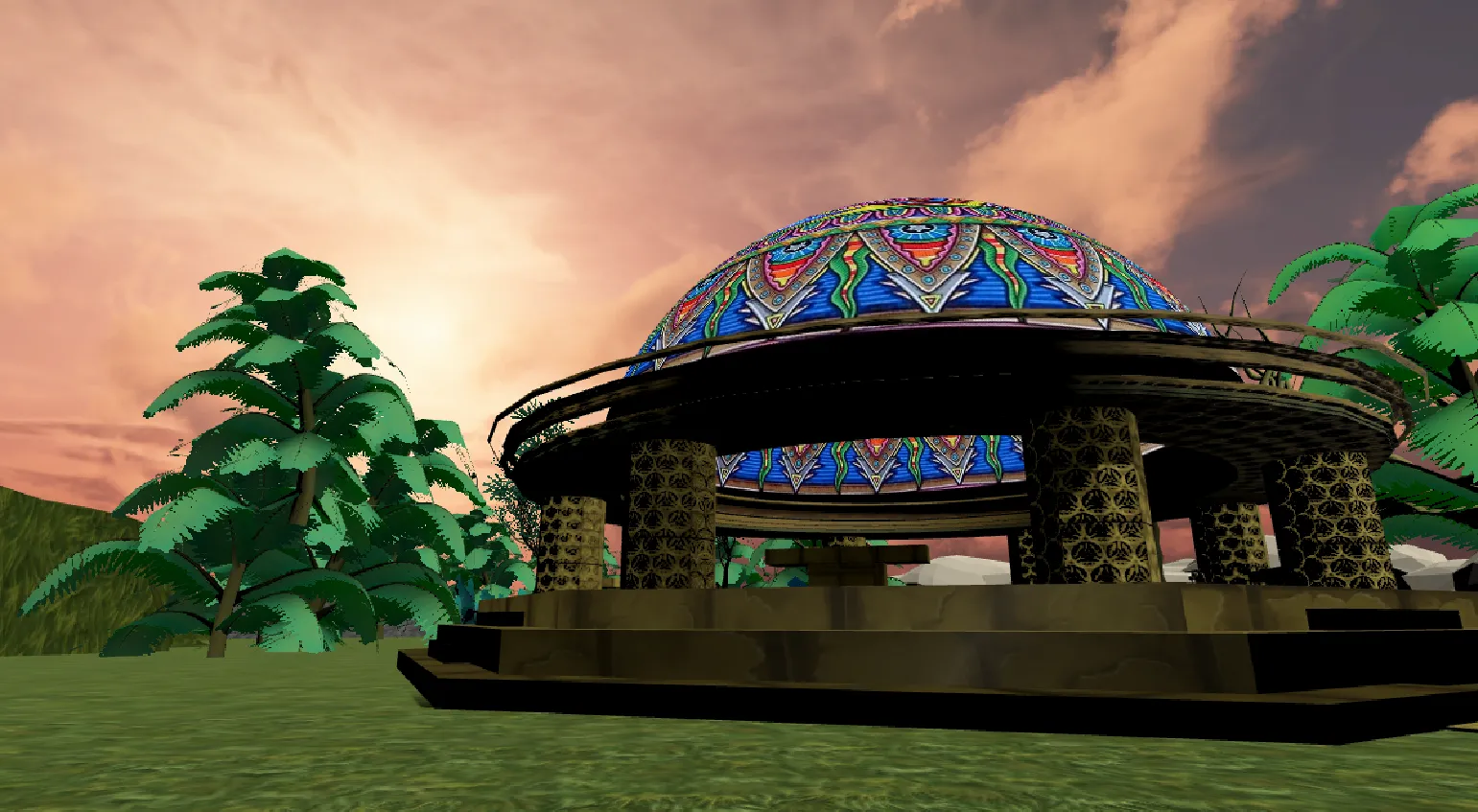

VR DOME PARTIES

VIRTUAL EVENTS

MIRAO

INSTALLATION

TELAVAVISION

FESTIVAL

Food for Thought

MUSEUM INSTALLATION

Red Bull BC One Cypher Minneapolis

LIVE EVENT

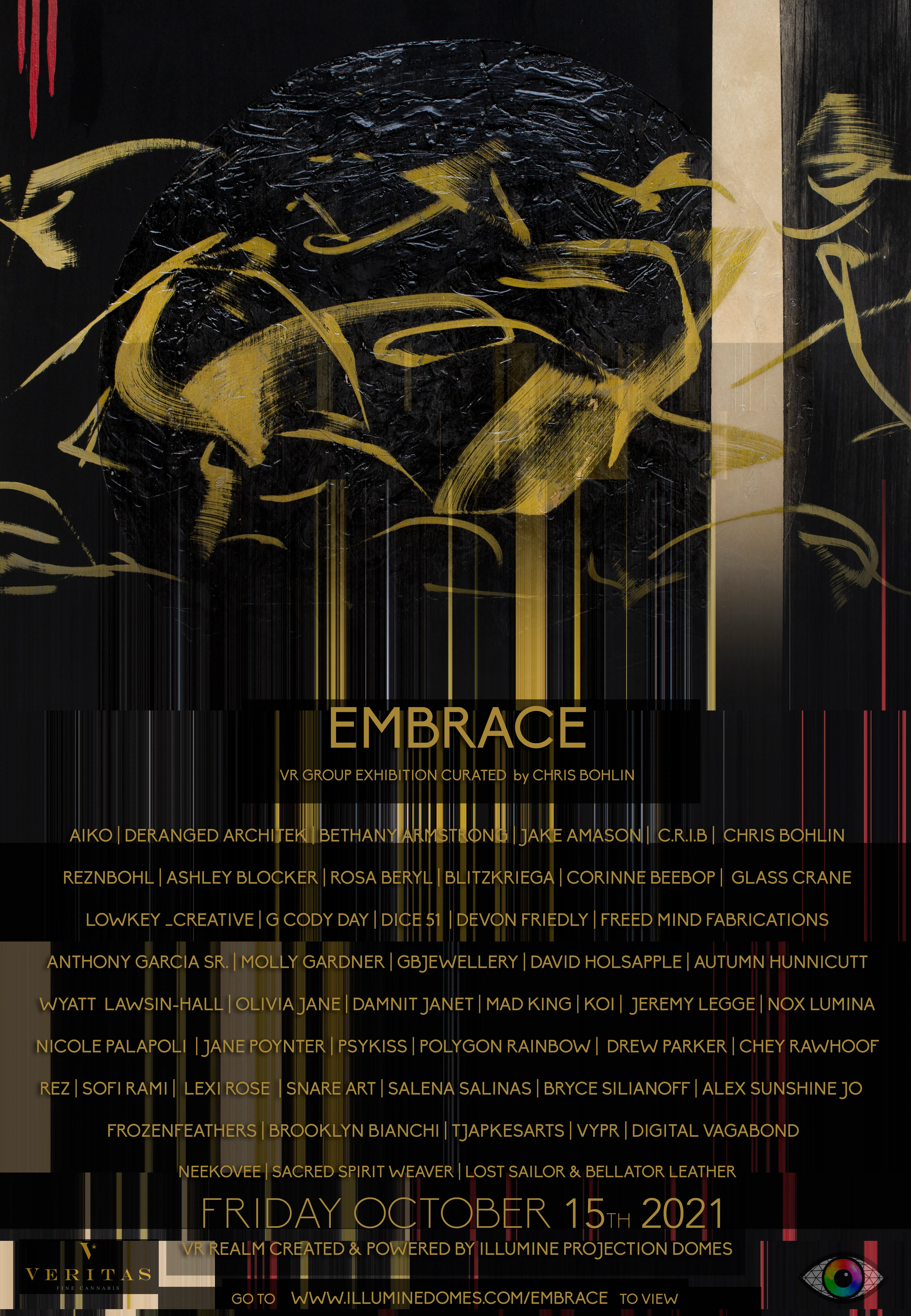

EMBRACE

VR ART EXHIBITION

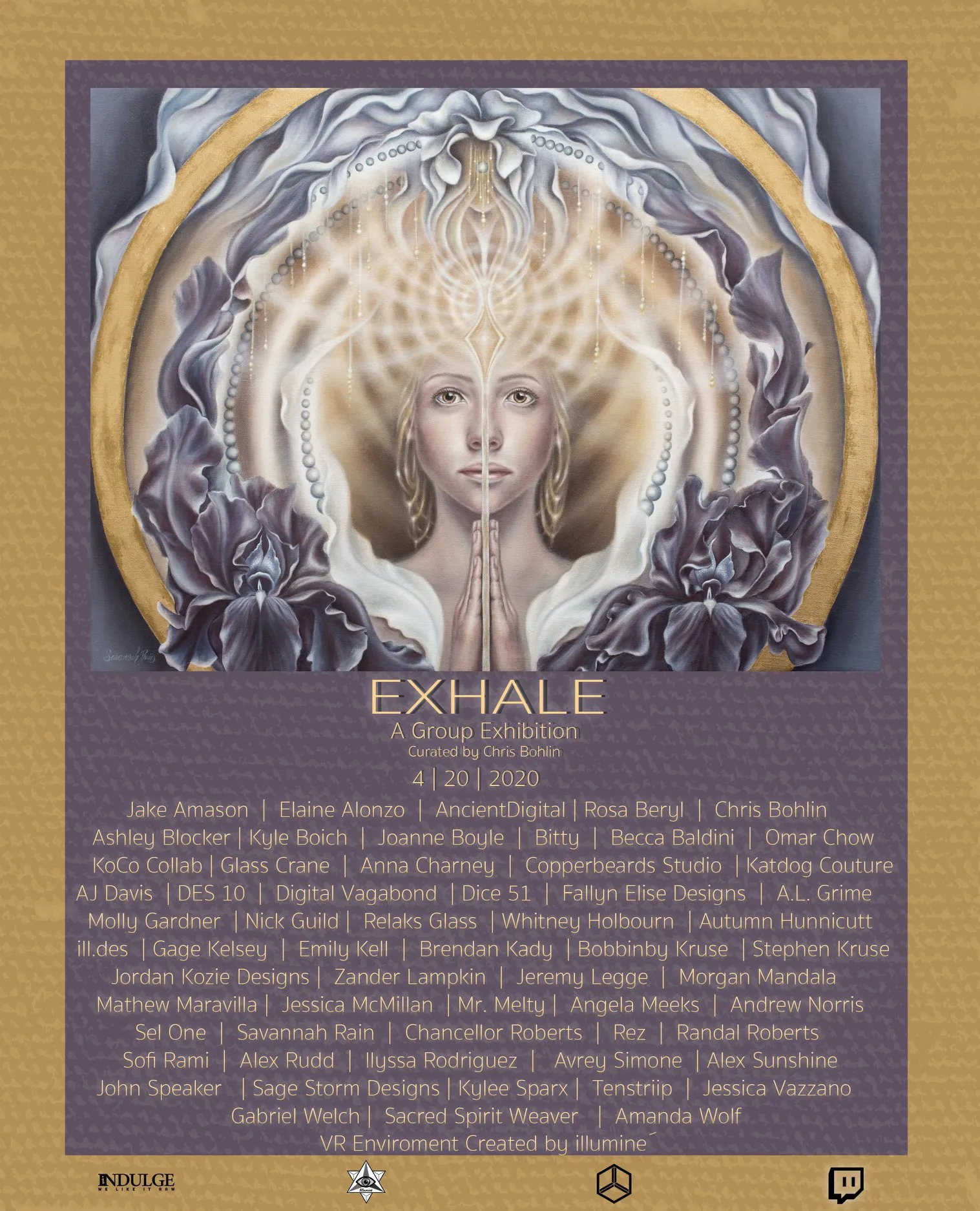

EXHALE

VR ART EXHIBITION

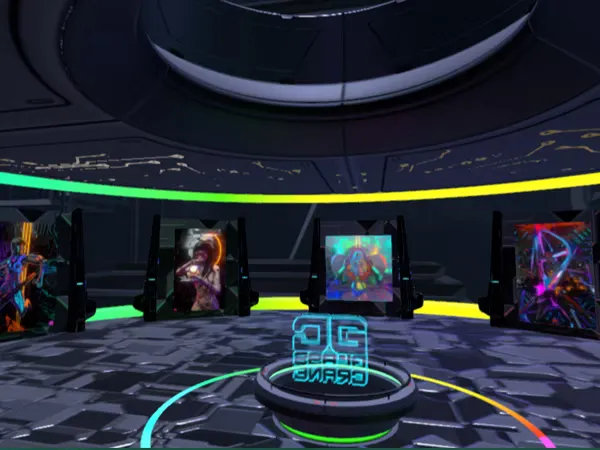

GLASS CRANE VR GALLERY

VR ART GALLERY

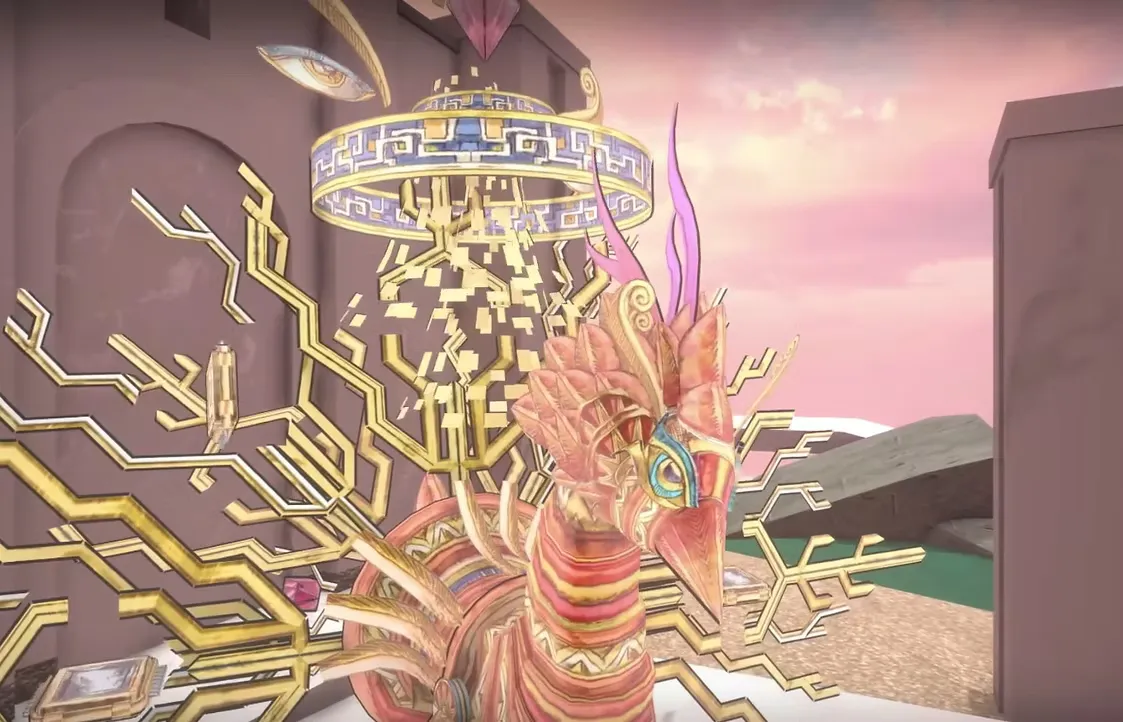

Chris Dyer VR Experience

VIRTUAL REALITY

Galaktic Gang VR World

BRAND IDENTITY

SUPERRARE ATLANTIS

VR ART SHOW

SUPERRARE AETHER

VR ART SHOW

Noiserator

WEB APP

FANTASTIC REALITIES

INTERACTIVE

Interactive Touchscreen Map

INTERACTIVE

DOME PROJECTION SIMULATOR

WEB TOOL

Housing Opportunities Commission

WEB PLATFORM

JMM Interactive Button

INTERACTIVE

Scrap Yard: Innovators of Recycling

INTERACTIVE

Jews In Space

INTERACTIVE

KARAO

WEB APP

ICESat-2 Touchscreen Installation

INTERACTIVE KIOSK

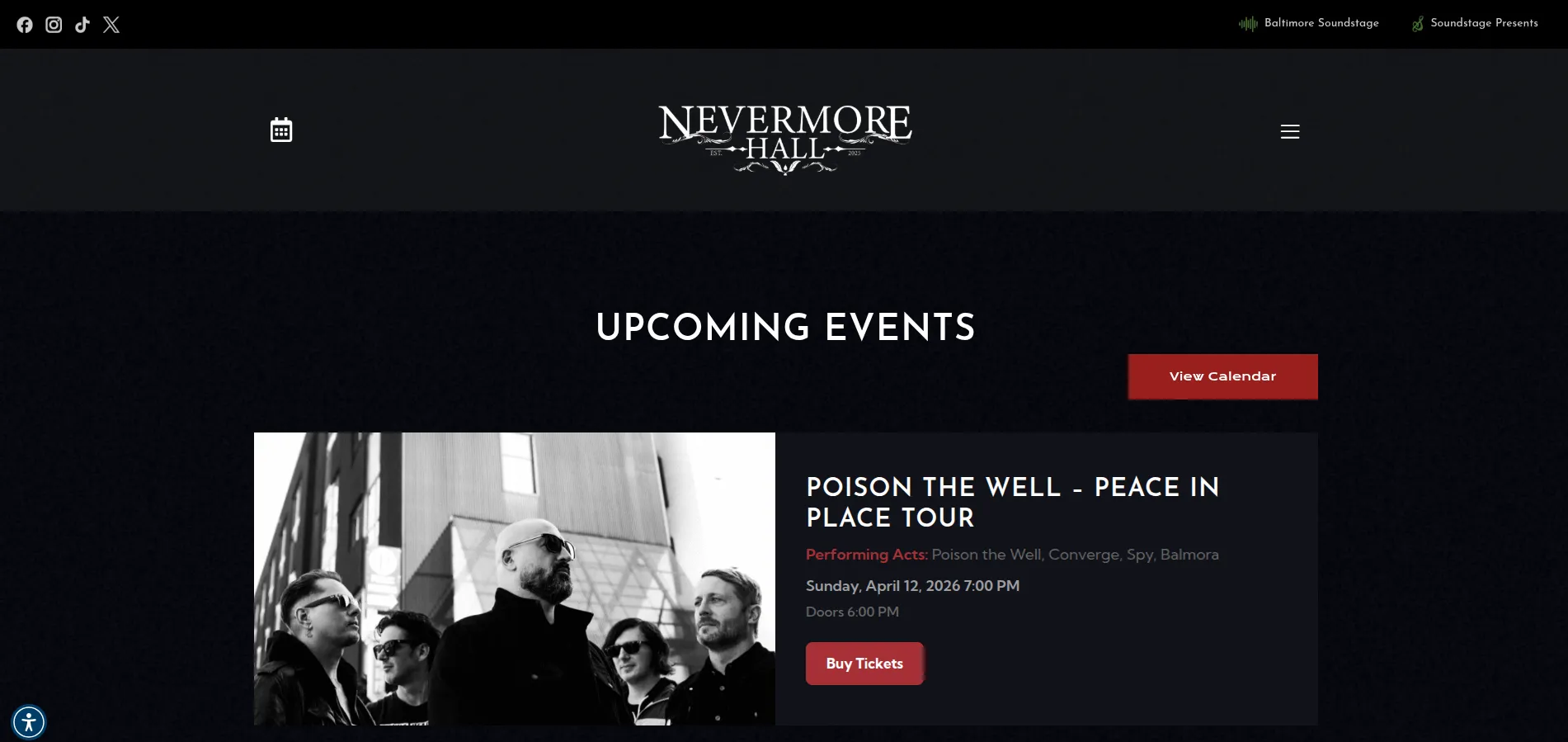

Nevermore Hall

WEBSITE

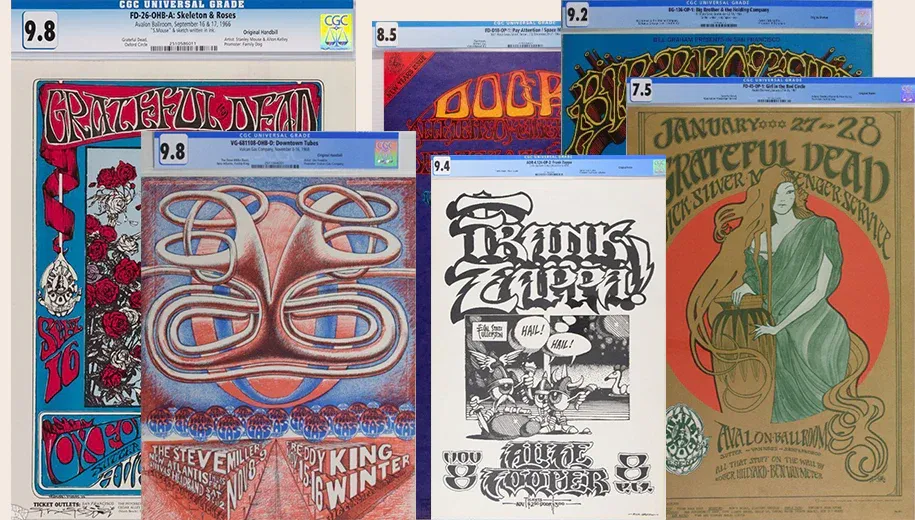

Auction Management System

ASSET MANAGEMENT PLATFORM

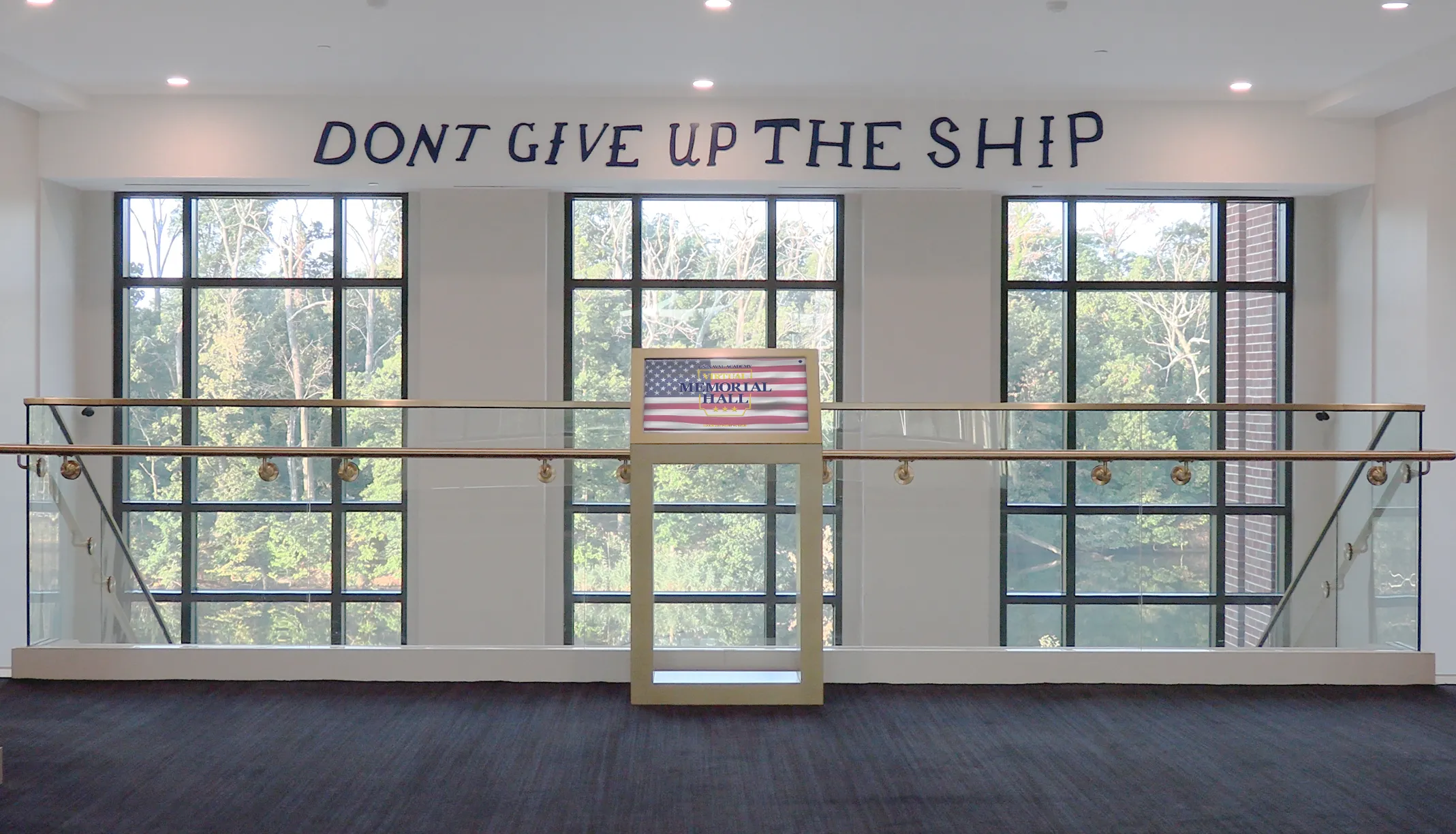

Virtual Memorial Hall

WEB PLATFORM

Frequently Asked Questions

What kinds of software do you build?

What tech stack do you work with?

Do you build for both web and installation hardware?

Can you integrate sensors, LiDAR, or computer vision?

Have you built anything for public institutions?

Do you provide ongoing maintenance?

Have a project in mind?

Let's talk about how our engineering team can bring it to life.